The next big breakthrough in robotics, experts say, will come when androids, imbued with social intelligence, can collaborate, improvise, and respond creatively to nearly any set of instructions. But for this to happen, engineers will need to develop artificial-intelligence systems with a modicum of self-awareness. Only then will a robot be able to recognize that another machine, or a human, may have a perspective on the world that is different from its own.

Now a team of Columbia engineers led by mechanical engineer Hod Lipson, computer scientist Carl Vondrick, and doctoral candidate in computer science Boyuan Chen has taken a significant step toward this goal. They say they have designed a robot that displays a “glimmer of empathy.”

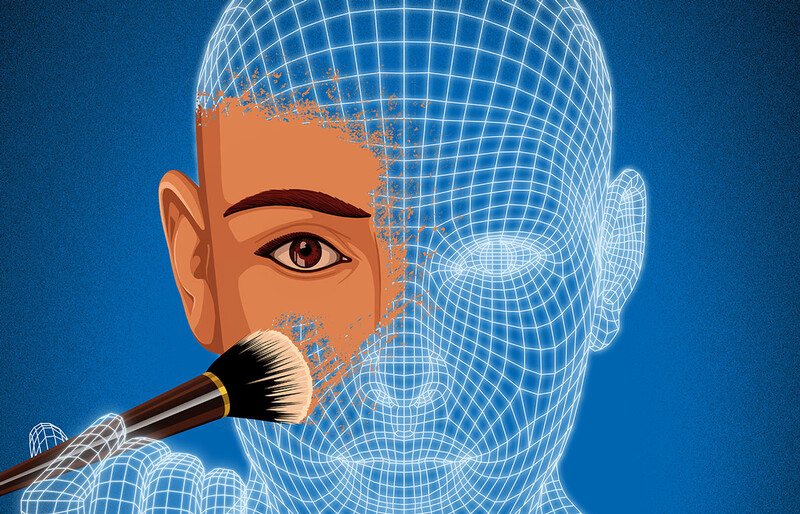

The Columbia team’s new machine doesn’t look like much, and its talents are purely cerebral. Little more than a video camera attached to a powerful computer, it is designed to observe its surroundings and offer predictions (in the form of rudimentary drawings) about what it expects to see a few seconds in the future. To make those predictions, it uses an artificial-intelligence technique called deep learning, which mimics how the human brain continually integrates new information through observation and repetition. The Columbia engineers did not preprogram the robot with any information about the world or its surroundings, preferring to let it form its own impressions over time.

The team then conducted a series of experiments in which the robot observed, from an elevated perch in the engineering school’s Creative Machines Lab, a small Segway-like robot zipping around on the floor beneath it. The observing robot quickly figured out what its fellow was up to, accurately surmising that it was chasing green dots that periodically appeared on the floor. It correctly predicted the paths the other machine would take around various obstacles to reach the dots. But things got really interesting when the green dots began to appear in places where the robot on the floor could not see them, such as directly behind barriers. After witnessing this several times, the observing robot, whose bird’s-eye view of the lab enabled it to see every dot, predicted that the mobile robot would fail to pursue them.

“That’s the crucial moment,” says Chen. “It’s when the observing robot essentially says to itself, ‘OK, although I can see that dot right now, the machine down there cannot see it.’”

The Columbia researchers, whose findings appear in the journal Scientific Reports, are not claiming that their robot is actually self-aware. But they do hypothesize that the computational processes by which it spots patterns of movement in its field of vision could represent the algorithmic underpinnings of self-awareness, since its deep-learning architecture is able to generate insights that can help it recognize another entity. “The ability of a machine to predict actions and plans of other agents without preprogramming may shed light on the origins of social intelligence,” the authors write.

By developing their technology further and by expanding its interpretive abilities to include other types of sensory stimuli, the Columbia engineers hope to eventually create systems that will enable robots to cooperate. “You could have assembly-line robots that sense when other robots are struggling to complete a task and go over to help them. Or you could have self-aware cars that are able to communicate with each other and avoid accidents,” says Chen. “The possibilities are endless.”